Frontline workers are leaving at high rates, and a significant share change jobs within a year. By the time a supervisor notices withdrawn body language or shorter shift chatter, the resignation is usually already drafted. Predictive workforce analytics gives HR directors and operations leaders a way to spot flight risks weeks earlier, when a retention conversation still has a chance of working.

The seven steps below cover what separates programs that reduce attrition from those that produce unused dashboards.

TL;DR

- Start with one specific retention problem you can measure, not a broad data goal

- Consolidate historical workforce data across HRIS, payroll, and timekeeping before building models

- Pilot in your highest-turnover department first, not a low-stakes area

- Build employee trust through governance: aggregation rules, plain-language policies, and limits on how scores are used

- Train shift supervisors and store managers to act on risk flags, not just read dashboards

- SMS-based platforms like Yourco help close the frontline data gap by reaching every worker where they already communicate

1. Define Clear, Measurable Objectives Before Touching Data

Programs that start with a vague mandate to "use data better" consistently fail. The most common failure mode is fixating on a metric without tying it to a business outcome. Higher engagement scores do not automatically translate into lower turnover, and treating them as interchangeable leaves HR teams with a program that gets built but never used.

Before purchasing software or pulling reports, define one bounded, high-cost problem. For frontline organizations, a strong starting point is first-year retention, since the first year is the hardest period for holding onto hourly workers.

Build your business case around these baseline metrics:

- Cost-per-departure for the target role. A simple replacement-cost estimate gives leadership a clear financial baseline.

- First-year voluntary attrition by role and location. This is the most actionable starting metric for frontline organizations.

- Named business owner who will act on outputs. Without an operational decision-maker assigned, analytics programs produce dashboards that no one is accountable for acting on.
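As a rough illustration of the first two baseline metrics, the Python sketch below computes first-year voluntary attrition for a hire cohort and a simple replacement-cost figure. The `Hire` record, its field names, and the cost components (recruiting, training, vacancy coverage) are assumptions made for this example, not a standard formula, and the sample numbers are invented. It also assumes every hire in the cohort started at least a year ago, so the full first year is observable.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Hire:
    """Hypothetical record shape; adapt the fields to your HRIS export."""
    role: str
    location: str
    hire_date: date
    exit_date: Optional[date] = None  # None means still employed
    voluntary: bool = False           # only meaningful when exit_date is set


def first_year_voluntary_attrition(hires: list[Hire]) -> float:
    """Share of a hire cohort that left voluntarily within 365 days of hire."""
    if not hires:
        return 0.0
    early_quits = sum(
        1
        for h in hires
        if h.exit_date is not None
        and h.voluntary
        and (h.exit_date - h.hire_date).days <= 365
    )
    return early_quits / len(hires)


def cost_per_departure(recruiting: float, training: float,
                       vacancy_days: int, daily_vacancy_cost: float) -> float:
    """Simple replacement-cost estimate: hiring + training + vacancy coverage."""
    return recruiting + training + vacancy_days * daily_vacancy_cost


# Illustrative records only, not real data.
cohort = [
    Hire("picker", "site_a", date(2023, 1, 9), date(2023, 5, 2), voluntary=True),
    Hire("picker", "site_a", date(2023, 1, 9)),
    Hire("picker", "site_a", date(2023, 2, 6), date(2023, 3, 1), voluntary=False),
    Hire("picker", "site_a", date(2023, 2, 6), date(2024, 6, 1), voluntary=True),
]
rate = first_year_voluntary_attrition(cohort)
unit_cost = cost_per_departure(recruiting=1200.0, training=800.0,
                               vacancy_days=20, daily_vacancy_cost=150.0)
print(f"First-year voluntary attrition: {rate:.0%}")
print(f"Estimated cost per departure: ${unit_cost:,.0f}")
```

Filtering the cohort by role and location before calling the function gives the per-role, per-site view described above.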

Spend the first phase on problem definition and stakeholder alignment before any modeling work begins.

2. Consolidate High-Quality Data Across HRIS, Payroll, and Timekeeping

People analytics programs fail at the data layer far more often than the model layer. The HR.com State of People Analytics report confirms that many HR professionals say their systems are not well integrated for analysis, and pulling data from multiple systems remains one of the hardest parts of the work. The challenge is steeper for frontline organizations, where administrative and hourly workforces typically run on separate systems.

To produce reliable predictions, build from consistent historical records across your core systems before analysis begins. Here is the priority order for system integration:

- HRIS and payroll (highest priority): Employee master data, tenure, compensation, and hours worked from your system of record

- Timekeeping (high priority): Attendance patterns, overtime, and shift adherence are the highest-signal frontline-specific data sources

- Engagement data: Survey responses, pulse feedback, and SMS communication sentiment fill the gap that email-based methods miss with frontline workers

If you already collect feedback through SMS-based pulse checks, treat those employee surveys as a data source in their own right, since they capture frontline input through a channel workers already use. That channel matters: 93% of HR leaders say improved communication would improve non-desk employee retention, according to a Yourco-commissioned survey of 150 HR leaders. Prioritize integrating these systems before any modeling work begins.
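A minimal sketch of the consolidation step, assuming each system can export one record per employee keyed by a shared employee ID. The `consolidate` function and every field name here are hypothetical. The point of the example is the gap report: it shows which employees are missing from payroll or timekeeping before any model sees the data.

```python
def consolidate(
    hris: dict[str, dict],
    payroll: dict[str, dict],
    timekeeping: dict[str, dict],
) -> tuple[list[dict], dict[str, list[str]]]:
    """Join per-employee records on employee ID and report coverage gaps.

    The HRIS export is treated as the system of record, so every HRIS
    employee appears in the output even when other sources are missing.
    """
    merged: list[dict] = []
    gaps: dict[str, list[str]] = {"payroll": [], "timekeeping": []}
    for emp_id, master in sorted(hris.items()):
        row = {"employee_id": emp_id, **master}
        for name, source in (("payroll", payroll), ("timekeeping", timekeeping)):
            if emp_id in source:
                row.update(source[emp_id])
            else:
                gaps[name].append(emp_id)  # record the hole instead of guessing
        merged.append(row)
    return merged, gaps


# Illustrative exports only, not real data.
hris = {
    "e1": {"role": "cashier", "tenure_months": 4},
    "e2": {"role": "stocker", "tenure_months": 15},
}
payroll = {"e1": {"hourly_rate": 16.5}, "e2": {"hourly_rate": 18.0}}
timekeeping = {"e1": {"absences_90d": 3}}

merged, gaps = consolidate(hris, payroll, timekeeping)
print(gaps)
```

A long gap list for one source is usually a sign that the integration, not the model, needs attention first.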

3. Pilot in One High-Impact Area First

A bounded pilot both validates model accuracy and builds organizational trust. Run the pilot in a high-impact area, such as your highest-turnover shift or location, rather than a low-stakes environment. A pilot in a low-turnover area produces too few departures to validate the model and fails to demonstrate business value.

Plan for a defined pilot window across design, execution, and evaluation. During the pilot, track these metrics:

- Model precision: Of the workers the model flagged as flight risks, how many actually quit?

- Model recall: What percentage of departing workers did the model flag?

- Manager action rate: What share of risk flags do managers turn into documented retention conversations, and how quickly?

- Pilot-period attrition versus baseline: Compare the pilot window against the same period last year or a matched control group.
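The precision, recall, and baseline comparison above can be sketched in a few lines of Python. The function names and the employee IDs are hypothetical, and the counts are invented for illustration.

```python
def precision_recall(flagged: set[str], departed: set[str]) -> tuple[float, float]:
    """Precision: share of flagged workers who actually left.
    Recall: share of departed workers the model had flagged."""
    true_positives = len(flagged & departed)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(departed) if departed else 0.0
    return precision, recall


def attrition_change(pilot_exits: int, pilot_headcount: int,
                     baseline_exits: int, baseline_headcount: int) -> float:
    """Pilot attrition rate minus baseline rate (negative means improvement)."""
    return pilot_exits / pilot_headcount - baseline_exits / baseline_headcount


# Illustrative pilot results only, not real data.
flagged = {"e1", "e2", "e3", "e4"}
departed = {"e2", "e3", "e7", "e8", "e9"}

precision, recall = precision_recall(flagged, departed)
change = attrition_change(pilot_exits=18, pilot_headcount=200,
                          baseline_exits=26, baseline_headcount=200)
print(f"precision={precision:.2f} recall={recall:.2f} change={change:+.1%}")
```

In this made-up pilot, half of the flags were correct, but the model missed three of the five people who left, which is the kind of gap recall is meant to expose.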

The Experian case study shows the payoff: predictive turnover modeling helped reduce global attrition and save $14 million over two years. Expect usable results only after enough time has passed to see whether flagged employees actually stay or leave.

4. Build Transparency and Trust Around Employee Data Use

Frontline workers have good reasons to distrust analytics. Warehouses, hotels, and retail chains have used performance analytics to track quotas, accelerate the pace of work, and inform disciplinary decisions, creating a real trust deficit that any predictive program now inherits.

Trust requires governance, not just communication. A common practice among HR analytics teams is to aggregate predictive data to the team or site level before sharing it with managers, keeping individual risk scores within HR.

Put these five governance commitments in place before launching any predictive program:

- Plain-language data use policy explaining what is collected, how it is used, and what decisions it does not influence

- Explicit prohibition on using predictive outputs for discipline or termination without independent human review

- Aggregation rules that prevent individual-level risk scores from reaching direct managers

- Documented worker rights to access, correct, and understand their data

- Frontline worker representatives involved in pilot design, including shift leads, not just HR
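One way to enforce the aggregation commitment in software is to publish only site-level averages and suppress any site too small to hide an individual. The sketch below assumes a minimum group size of five. That threshold is an illustrative choice, not something the practice prescribes, and the function and field names are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Optional

MIN_GROUP_SIZE = 5  # assumed suppression threshold; choose your own with HR and legal


def site_level_view(
    scores: list[tuple[str, str, float]],
) -> dict[str, Optional[float]]:
    """Turn (employee_id, site, risk_score) rows into a manager-facing view.

    Managers see one average per site. Sites with fewer than
    MIN_GROUP_SIZE workers are reported as None so that no individual
    score can be inferred from the average.
    """
    by_site: dict[str, list[float]] = defaultdict(list)
    for _employee_id, site, score in scores:
        by_site[site].append(score)
    return {
        site: round(mean(values), 2) if len(values) >= MIN_GROUP_SIZE else None
        for site, values in by_site.items()
    }


# Illustrative scores only, not real data.
scores = [
    ("e1", "site_a", 0.2), ("e2", "site_a", 0.4), ("e3", "site_a", 0.6),
    ("e4", "site_a", 0.8), ("e5", "site_a", 0.5),
    ("e6", "site_b", 0.9), ("e7", "site_b", 0.1),
]
view = site_level_view(scores)
print(view)
```

Employee IDs never appear in the output, so the individual scores stay with HR while managers still get a usable trend.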

Track survey response rates as a leading indicator of trust. If participation drops in the weeks after a predictive program launches, the cause is usually that workers don't trust how their answers will be used, not survey fatigue.

5. Train Frontline Managers to Interpret and Act on Analytics

When predictive programs stall, the model is usually blamed, but the breakdown often occurs at a more basic level: a manager receives a risk flag and does not know what to do with it. MIT Sloan Management Review traces this to familiar root causes: treating analytics as a technology project instead of a business initiative, user resistance to new workflows, and leaders who fund initiatives without actively championing them.

Whether a risk flag leads to action depends almost entirely on the shift supervisor or store manager who receives it. Structure training in tiers:

- Tier 1 (frontline workers): What data is collected, what it means, and what rights they have

- Tier 2 (shift supervisors and store managers): How to read a risk dashboard, how to run a stay interview, what to do when a flag fires on a high performer, and how to avoid over-relying on the score

- Tier 3 (HR and analytics team): Model validation, bias detection, and governance oversight

Once the tiers are in place, measure manager confidence in interpreting outputs before and after training, and track how often a risk flag leads to a retention conversation within 30 days. The share of flags acted on within that window is the clearest adoption metric available.
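The adoption metric is simple to compute once each flag is logged with the date it fired and the date of any resulting conversation. This is a minimal sketch with a hypothetical log format and invented dates.

```python
from datetime import date
from typing import Optional


def action_rate(
    flags: list[tuple[date, Optional[date]]],
    window_days: int = 30,
) -> float:
    """Share of risk flags followed by a retention conversation in the window.

    Each entry is (flagged_on, conversation_on); conversation_on is None
    when no conversation was documented.
    """
    if not flags:
        return 0.0
    acted = sum(
        1
        for flagged_on, conversation_on in flags
        if conversation_on is not None
        and 0 <= (conversation_on - flagged_on).days <= window_days
    )
    return acted / len(flags)


# Illustrative log only, not real data.
flag_log = [
    (date(2024, 3, 1), date(2024, 3, 10)),  # acted on after 9 days
    (date(2024, 3, 1), date(2024, 4, 15)),  # 45 days: outside the window
    (date(2024, 3, 5), None),               # no conversation documented
    (date(2024, 3, 8), date(2024, 4, 7)),   # exactly 30 days: counts
]
rate_30d = action_rate(flag_log)
print(f"Flags acted on within 30 days: {rate_30d:.0%}")
```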

6. Run What-If Scenarios to Prepare for Workforce Volatility

Leading organizations are moving beyond forecasting who might leave. They are using scenario modeling to prepare for demand swings and talent gaps before they escalate into attrition.

The highest-impact scenarios for most frontline organizations fall into two categories. The first is volume and seasonal planning: if demand spikes for a short period, which sites will need proactive staffing buffers based on historical patterns?

The second is career-path simulation: which roles have the slowest paths to promotion, and how does opening up internal mobility change retention? Mercer research identifies clear, AI-informed skills pathways as a key factor in retaining frontline employees.

Peer-reviewed evidence supports the connection between schedule stability and business performance. A randomized controlled trial at Gap Inc., published in Management Science, found that predictable scheduling increased store productivity and sales, giving leaders causal evidence to justify scheduling investments.

7. Refine Models Continuously Using Precision and Recall Metrics

Precision and recall are the right ongoing evaluation metrics for any turnover model because raw accuracy misleads when most workers stay. A model predicting no one will leave can look highly accurate while delivering zero practical value.
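A small worked example makes the point concrete. Assume a hypothetical site of 100 workers where 10 leave during the period, and a model that predicts nobody will leave.

```python
# Illustrative numbers only: 10 of 100 workers leave, the model flags no one.
actual = [True] * 10 + [False] * 90   # True = worker left
predicted = [False] * 100             # model predicts everyone stays

correct = sum(a == p for a, p in zip(actual, predicted))
accuracy = correct / len(actual)

true_positives = sum(a and p for a, p in zip(actual, predicted))
recall = true_positives / sum(actual)

print(f"accuracy={accuracy:.0%} recall={recall:.0%}")
```

The model is right about the 90 workers who stayed, so accuracy comes out at 90%, yet it caught none of the 10 departures, so recall is 0%. Precision is undefined here because nothing was flagged.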

Bias detection is not one-time either. NIST SP 1270 establishes that bias identification and management are continuous processes across the AI lifecycle.

This information is for general awareness only. For specific compliance guidance, consult with qualified legal professionals.

Set a regular review schedule from day one. Every month or quarter, review precision, recall, and false positive rates. Retrain the model with new data and check whether the factors driving predictions have shifted. Once a year, run a full model audit that includes a disparate impact analysis across demographic groups.
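As one possible first screening step for the annual audit, the sketch below compares how often the model flags workers in each demographic group. The 0.8 review threshold is an assumption borrowed from the common four-fifths rule of thumb, applied here to flag rates purely for illustration. It is a prompt for closer review, not a compliance test, and the group labels and counts are invented.

```python
REVIEW_THRESHOLD = 0.8  # assumed screening threshold, not a legal standard


def flag_rates(counts: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to flagged / headcount, skipping empty groups."""
    return {
        group: flagged / headcount
        for group, (flagged, headcount) in counts.items()
        if headcount > 0
    }


def rate_ratio(rates: dict[str, float]) -> float:
    """Lowest group flag rate divided by the highest (1.0 = identical rates)."""
    if not rates:
        return 1.0
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody flagged in any group
    return min(rates.values()) / highest


# Illustrative counts only: group -> (workers flagged, total workers).
counts = {"group_a": (12, 100), "group_b": (20, 100)}

rates = flag_rates(counts)
ratio = rate_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(f"ratio={ratio:.2f} needs_review={needs_review}")
```

A low ratio does not prove the model is biased, since real attrition rates can differ between groups, but it tells the analytics team where to look first.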

Organizations with mature people analytics programs consistently see lower attrition and higher business productivity than low-maturity peers. Those results come from sustained investment in model quality and organizational adoption, rather than from one-time software purchases.

Turn Frontline Communication Into Workforce Intelligence With Yourco

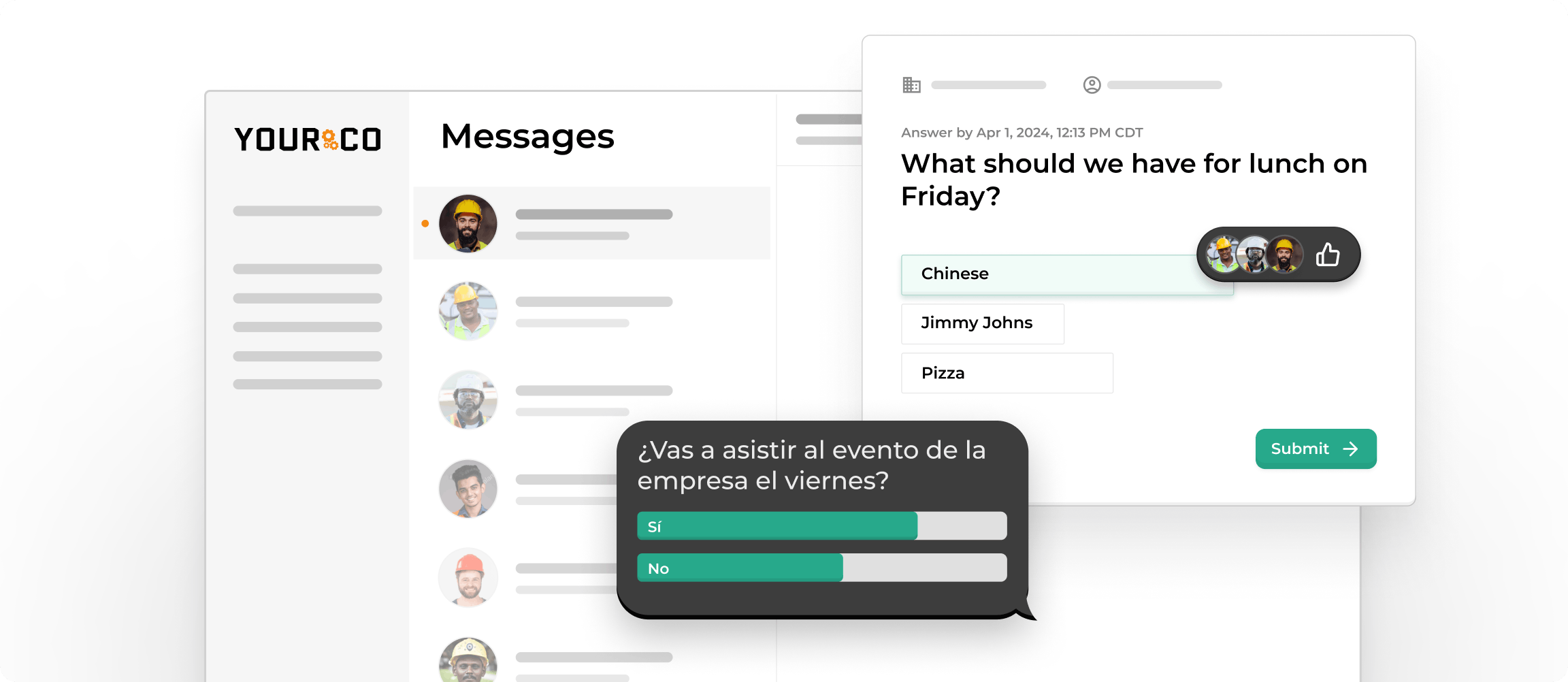

Turnover models depend on the quality of the signals feeding them, and many frontline signals sit in channels HR analytics never reach, including missed-shift texts, short pulse replies, and photos sent from the floor. Yourco helps close that frontline data gap by turning everyday SMS exchanges into structured workforce signals. According to a Yourco-commissioned survey of 150 HR leaders, 88% say better communication tools can reduce employee churn.

- SMS to any phone with no app download and no internet connection, working on basic flip phones and smartphones alike

- Two-way messaging so employees respond, report absences, and share feedback directly by text

- AI-powered translation across 135+ languages and dialects, enabling inclusive data collection from multilingual teams

Yourco integrates with 240+ HRIS and payroll systems, keeping employee data synchronized across platforms and reducing the fragmentation that undermines analytics programs.

Enterprise Bridge lets corporate leadership send centralized, one-way announcements across all locations while local managers maintain direct communication with their teams.

Frontline Intelligence provides HR and operations teams with centralized visibility into engagement trends, sentiment, and attendance signals across all sites. It consolidates poll results, survey responses, and daily SMS communications to surface frontline disengagement before it becomes attrition. Leaders can query call-off patterns, absence causes, and sentiment shifts by location, giving corporate teams the earliest possible read on where turnover risk is building.

"Yourco has helped to change the way we communicate at McCarthy Auto Group. We have nearly 700 employees and 80% are non-desk based, communication is a challenge. Yourco provides a quick easy way to reach everyone within our organization and a secure way for employees to reach HR and leadership without a computer."

— Felisha Parker, VP Human Resources, McCarthy Auto Group

After 90 days on Yourco, companies see two-way employee engagement reach 86%.

Try Yourco for free today, or schedule a demo to see the difference the right workplace communication solution can make for your company.

Frequently Asked Questions About Predictive Workforce Analytics

What is predictive workforce analytics?

Predictive workforce analytics uses historical employee data to forecast outcomes such as turnover risk, staffing gaps, and absenteeism patterns. For frontline organizations, it usually means combining HRIS, payroll, timekeeping, and engagement signals to flag workers who look likely to leave in the next 30 to 90 days.

How much historical data do I need before modeling turnover?

Most teams get useful signals from 18 to 24 months of consistent historical data covering tenure, hours, attendance, and voluntary exits. Shorter windows can still work for pilots, but expect more noise. Data quality across systems matters more than the absolute number of fields you collect.

How long before a predictive analytics program shows ROI?

A well-scoped pilot usually produces a measurable retention signal within six to twelve months, and full program ROI typically surfaces between twelve and eighteen months. Programs move faster when manager adoption is strong and slower when HRIS, payroll, and timekeeping systems are still being connected.

Should individual risk scores be shared with direct managers?

Common practice is to share aggregated team or site-level trends with direct managers, while keeping individual risk scores with HR. That split gives managers enough signal to act on without turning the model into a surveillance tool, which is the fastest way to lose employee trust and degrade the data feeding the model.

How is predictive workforce analytics different from standard HR reporting?

HR reporting tells you what has already happened, such as last quarter's turnover or last month's absences. Predictive analytics estimates what is likely to happen next based on patterns in that history. That shift from looking back to looking ahead is what gives HR time to act before a resignation.